The US Needs an Open Source AI Intervention to Beat China

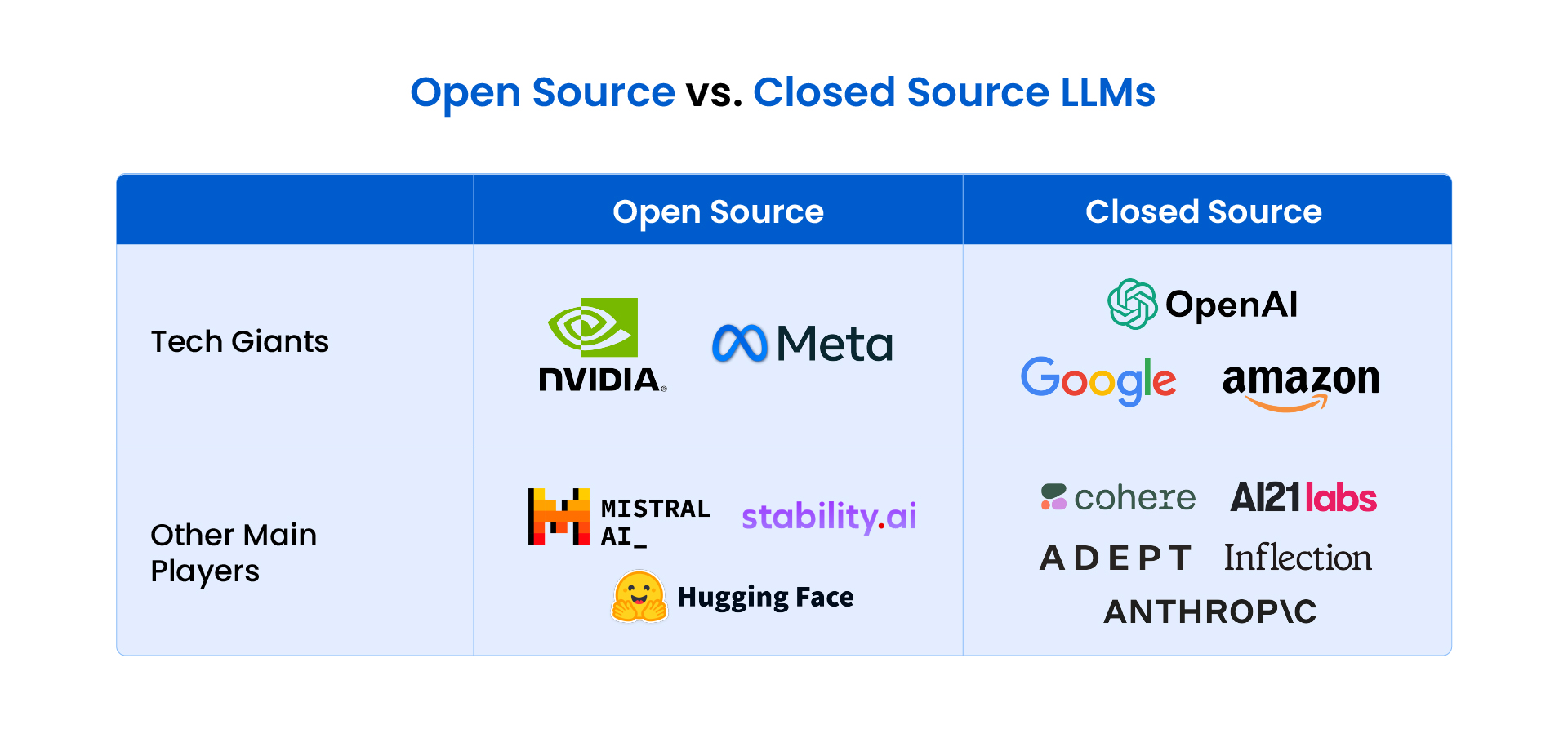

Since 2022, America has held a commanding lead in artificial intelligence, largely thanks to advanced proprietary models from companies such as OpenAI, Google DeepMind, Anthropic, and xAI. A growing number of experts and researchers now worry that the United States is losing ground in one critical area: open-weight AI models, which anyone can freely download, adapt, and run locally. That shift, they argue, threatens America’s long-term dominance in AI.

Open models from Chinese companies, including Kimi, Z.ai, Alibaba, and DeepSeek, are surging in popularity among researchers and engineers around the world. In many cases they surpass their American counterparts in accessibility, adaptability, and developer support, leaving the US a laggard in an increasingly important area of AI innovation. "The US needs open models to cement its lead at every level of the AI stack," Nathan Lambert, founder of the ATOM (American Truly Open Models) Project, tells WIRED.

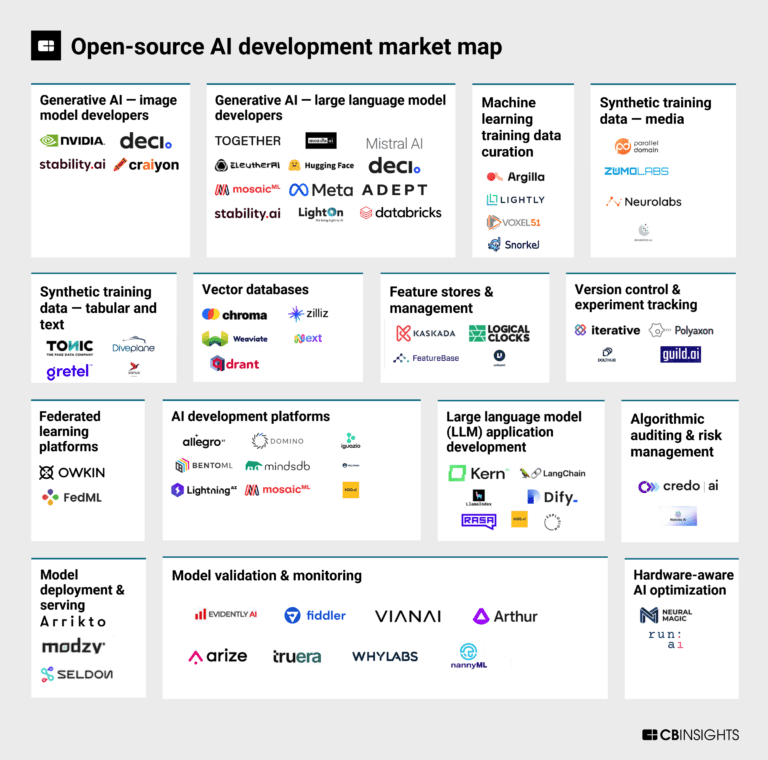

The most advanced models from leading US companies are typically accessible only through a chatbot interface or by sending queries to company servers via an application programming interface (API). Some US giants, including OpenAI and Google, have released open-weight models, but these are often significantly less capable than the companies’ proprietary models and fall short of the offerings from China. Chinese open-weight models are often better suited to modification and come with extensive developer support. They also benefit from a virtuous cycle: by open-sourcing their models, Chinese makers allow external researchers to contribute ideas and tweaks, and the best of these are folded into future releases. The result is a collaborative ecosystem that can move faster than any single proprietary developer.

Lambert, who is also a researcher at the Allen Institute for AI (Ai2), a nonprofit in Seattle, Washington, established the ATOM Project to highlight the risks of the US falling behind in open models. He gives two main reasons the country needs cutting-edge open models of its own. First, relying on foreign open models could prove disastrous if those models were suddenly discontinued, moved to closed licenses, or became subject to geopolitical restrictions, leaving American businesses, researchers, and government agencies exposed. Second, open models foster innovation and experimentation, particularly among startups and independent researchers, because they let smaller players build on advanced foundations without the high costs or restricted access of proprietary systems.

Open models also address a security concern: the handling of sensitive information. Organizations that work with proprietary, classified, or personally identifiable data need AI models they can run on their own hardware, in environments they control. That is often impossible with proprietary models served through an API, because the data must be sent to external servers. "Open models are a fundamental piece of AI research, diffusion, and innovation, and the US should play an active role leading rather than following other contributors," Lambert says. The ATOM Project, launched on July 4th, makes the case for greater openness and shows how Chinese open-weight models have overtaken US counterparts in recent years.

Ironically, the open model movement was ignited by an American tech giant: Meta. In July 2023, Meta released Llama, an open-weight frontier model, as part of an effort to reassert itself in the AI race. Llama opened up capabilities that had previously been the preserve of a few well-funded labs, and it quickly became popular among researchers and entrepreneurs, sparking new applications worldwide.

Since then, however, Meta and many other leading US AI companies appear to have shifted their focus toward building human-level or superhuman artificial general intelligence (AGI), ideally before their competitors do. That race has led to a noticeable decrease in the companies’ commitment to openness. Mark Zuckerberg, Meta’s CEO, has rebooted the company’s AI efforts with a string of expensive hires and a new "superintelligence" lab. Zuckerberg has also indicated that Meta may no longer open-source its best models, a departure from the company’s earlier pioneering role.

China’s tech industry, by contrast, has veered toward greater openness over the past year. In January 2025, DeepSeek, then a relatively unknown startup, unveiled an open model called DeepSeek-R1. The model sent shock waves through the global AI community because of its advanced capabilities and because it was trained at a fraction of the cost of major US models. Since then, numerous other Chinese companies have introduced powerful open-weight models with additional innovations, robust performance, and strong community support.

Some leading AI researchers contend that the US must embrace even more radical forms of openness to regain a competitive advantage. Percy Liang, a computer scientist at Stanford University and a signatory of an open letter supporting the ATOM Project, notes that most "open models" in both the US and China are only open-weight: the weights are published, but the training data can still be kept secret. That opacity can obscure biases, hinder reproducibility, and limit scientific understanding. Liang is leading an effort to address the problem with Marin, a large language model trained exclusively on openly available data. The project is funded by Google, Open Athena, and Schmidt Sciences, and aims to make every aspect of the model’s creation open to scrutiny and improvement.

Liang is also critical of the hype surrounding AGI, which he believes has been largely unhelpful. "The view that we would get one company to build AGI and then bestow it on everyone is a little bit misguided," he says. He believes the US government may need to play a more active role in promoting openness, potentially through funding, regulation, or support for collaborative research. Enabling more researchers to understand, build, and adapt AI models will lead to a healthier and more innovative tech ecosystem, Liang adds. "This is, I think, existential for many companies," he says. "We know from history what happens with monopolies."

Andrew Trask, CEO of OpenMined, a company developing "federated" approaches to AI training, recently called for a government effort of a different kind. Trask proposed a national initiative to help companies access vast quantities of nonpublic training data, likening it to ARPANET, the DOD-backed network that laid the foundation for the internet. He argues that unlocking data currently siloed within organizations could be crucial for future leaps in AI capabilities. China might have an advantage here, given its government’s capacity to mandate data sharing among companies and with model builders. "There’s something like 180 zettabytes of data out there," Trask says. By comparison, today’s most powerful AI models are typically trained on several hundred terabytes of data, and one zettabyte equals a billion terabytes.
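To make the scale concrete, the comparison can be worked through in a few lines of Python. This is only an illustrative sketch: the article says "several hundred terabytes," so the 500 TB figure below is an assumed stand-in, not a reported number, and the variable names are hypothetical.

```python
# Illustrative arithmetic only. The 500 TB training-set size is an
# assumption standing in for "several hundred terabytes".
TB_PER_ZB = 10**9  # one zettabyte is a billion terabytes (decimal SI units)

total_data_zb = 180        # Trask's rough estimate of existing data
assumed_training_tb = 500  # hypothetical training corpus size

# Convert the total to terabytes so both figures share a unit.
total_data_tb = total_data_zb * TB_PER_ZB

# How many training corpora of the assumed size would fit in the total.
ratio = total_data_tb // assumed_training_tb

print(f"Total data: {total_data_tb:,} TB")
print(f"Roughly {ratio:,} times the assumed training corpus")
```

Under that assumption, the data Trask describes is hundreds of millions of times larger than a single training corpus, which is why even a small fraction of it would matter.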

Lambert remains optimistic that a US open-model push is both feasible and affordable. He notes that some companies are beginning to express interest in backing efforts to build open-weight frontier models. "The most important thing here is how cheap it would be for the US to compete with these Chinese open models," Lambert says. The ATOM Project estimates that building and sustaining a frontier open-source AI model would cost approximately $100 million a year.

By the standards of the AI industry, that figure is modest. As Lambert points out, $100 million is roughly what Mark Zuckerberg reportedly offered individual AI researchers to join Meta’s new superintelligence effort. A relatively small investment could therefore yield outsized returns in national innovation, security, and long-term leadership in AI. The choice, it seems, is to embrace openness or risk being left behind.