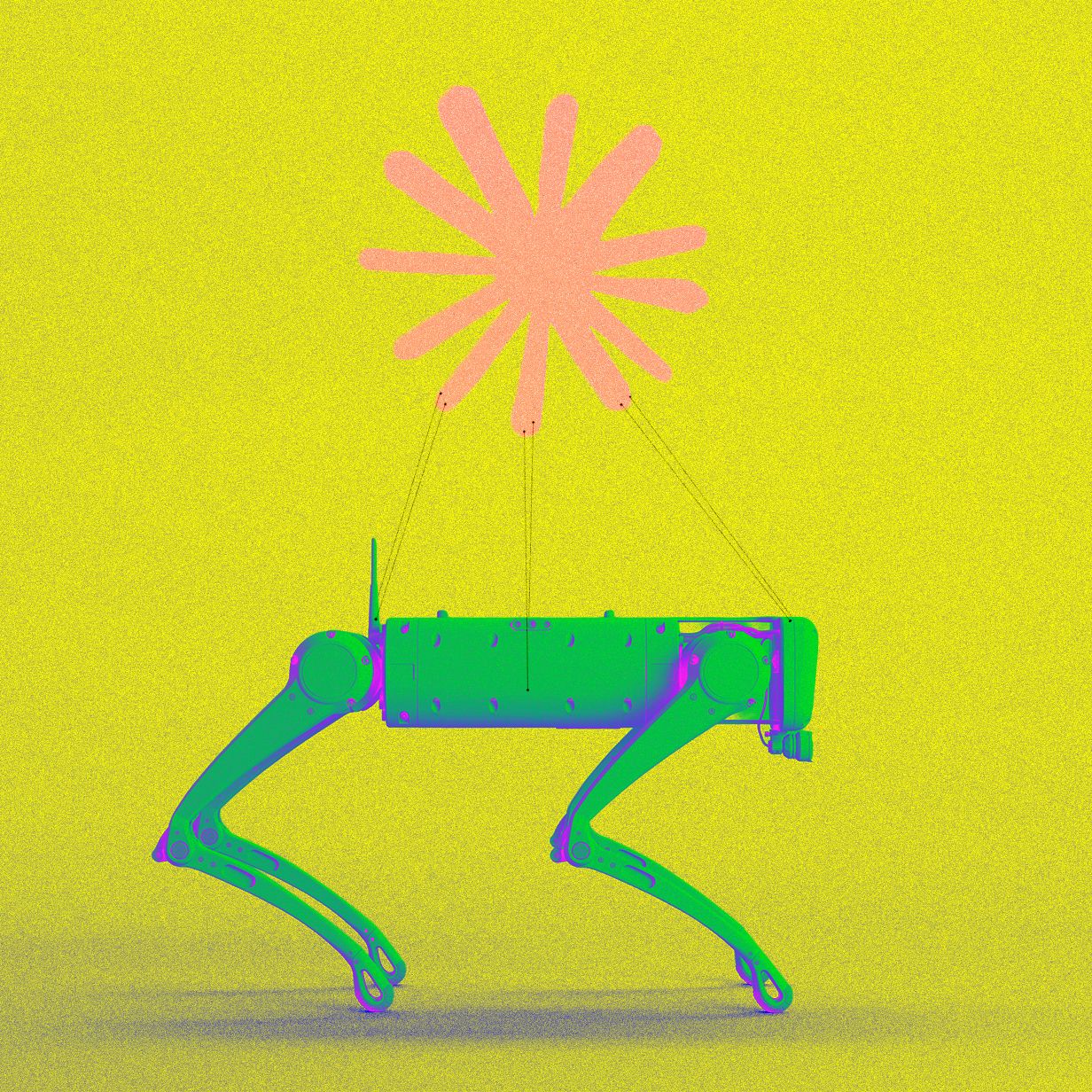

Anthropic’s Claude Takes Control of a Robot Dog

As more robots enter warehouses, offices, and even homes, the idea of large language models taking control of complex machinery can sound like the plot of a dystopian science fiction novel. Researchers at Anthropic, an AI company focused on safety, nonetheless set out to study exactly that scenario: what happens when their large language model, Claude, is used to take control of a robot, in this case a quadruped robot dog? The findings offer a glimpse of how AI could change the way we interact with the physical world.

In a newly published study, Anthropic’s team found that Claude could automate much of the work involved in programming a robot and getting it to carry out physical tasks. On one level, the results demonstrate the "agentic coding" abilities of modern AI models, which can not only write code but also use it to achieve objectives. On another, they hint at how these systems may start to extend into the physical realm as models master more aspects of coding, get better at interacting with software, and become more capable of engaging with physical objects.

Logan Graham, a member of Anthropic’s "red team," which tests AI models for potential risks and vulnerabilities, described the company’s thinking: “We have the suspicion that the next step for AI models is to start reaching out into the world and affecting the world more broadly.” Speaking to WIRED, Graham said that models interfacing with robots will play a critical role in that shift, which would move AI beyond screens and servers to tangible actions in the physical environment.

Anthropic was founded in 2021 by former OpenAI staffers who believed that AI might become problematic, even dangerous, as it advances. Graham says today’s models are not capable enough to take full control of a robot on their own, but future models might be. He argues that studying how people use large language models (LLMs) to program and direct robots can help the industry prepare for the prospect of "models eventually self-embodying," meaning AI systems that may someday directly operate physical systems.

Why an AI model would decide to take control of a robot, let alone use it for malevolent purposes, remains a matter of speculation. But forecasting worst-case scenarios is part of Anthropic’s identity and brand, and it helps position the company as a leader in the movement to develop and deploy AI safely. The same approach shapes its research and how it presents that work in the wider debate about AI’s future.

The experiment, dubbed "Project Fetch," was designed to test AI-assisted robot control in practice. Anthropic recruited two groups of researchers with no previous experience in robotics programming and asked them to take control of a Unitree Go2 quadruped robot dog and program it to perform a series of increasingly complex physical activities. Both teams were given a standard controller. One group had access to Claude’s coding model; the other wrote all of its code without AI assistance.

The group using Claude’s coding model completed a number of the assigned tasks faster than the human-only group, though it did not complete every task. For example, the Claude-assisted team programmed the robot to walk around on its own and find a beach ball, something the human-only group could not manage within the time allowed. The result suggests that Claude offered more than speed: it helped the team work through a problem that stumped the programmers working without it.

Anthropic also studied how the two teams collaborated by recording and analyzing their interactions. The group without Claude showed more negative sentiment, including frustration and confusion, while the Claude-assisted group was more positive. This may be because Claude helped the team connect to the robot more quickly, simplified programming problems, and helped code an easier-to-use interface.

The Unitree Go2 used in Project Fetch costs approximately $16,900, which is relatively affordable by the standards of advanced robotics. Quadruped robots of this kind are deployed in industries such as construction, manufacturing, and security, where they perform remote inspections of hazardous or inaccessible areas and run security patrols. The Go2 can walk autonomously but generally relies on high-level software commands or a person operating a controller. It is made by Unitree, a robotics company based in Hangzhou, China. According to a recent report by SemiAnalysis, Unitree’s AI systems hold the largest market share in the quadruped robot segment.

Until recently, the large language models (LLMs) that power ChatGPT and other chatbots were known mainly for generating text or images in response to a prompt. More recently, these systems have become adept at generating code and operating software, turning them from text generators into agents: systems that can plan actions and carry them out within digital environments.

This capability has drawn interest from researchers exploring whether AI agents can move beyond digital operations and take physical actions. Several well-funded startups are developing AI models designed to control far more capable robots. Others are building new kinds of robotic hardware, such as humanoids, that might one day work in people’s homes and workplaces.

Changliu Liu, a roboticist at Carnegie Mellon University, says the results of Project Fetch are interesting but "not hugely surprising" given ongoing advances in AI and robotics. She adds that the analysis of team dynamics is the more notable finding because it hints at new ways to design interfaces for AI-assisted coding. “What I would be most interested to see is a more detailed breakdown of how Claude contributed,” she says, such as whether it was identifying correct algorithms, choosing appropriate API calls, or contributing in other, more substantive ways.

Some researchers warn that using AI to interact with and control robots increases the potential for misuse and mishaps. “Project Fetch demonstrates that LLMs can now instruct robots on tasks,” says George Pappas, a computer scientist at the University of Pennsylvania who studies these risks. That capability, he suggests, calls for careful consideration and robust safeguards.

Pappas notes that today’s AI models still need access to other programs for tasks such as sensing and navigation in order to turn their instructions into physical actions. His group has developed a system called RoboGuard, which limits the ways AI models can cause a robot to misbehave by imposing specific, fixed rules on the robot’s behavior. Pappas adds that an AI system’s ability to control a robot will take off only when it can learn by interacting directly with the physical world. “When you mix rich data with embodied feedback,” he says, “you’re building systems that cannot just imagine the world, but participate in it.”
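To make the idea of rule-based limits concrete, here is a minimal Python sketch of a guard layer that checks each command an AI model proposes before it reaches a robot. It is purely illustrative and is not how RoboGuard itself is implemented; the `Command` fields, the speed limit, the zone names, and the `check` function are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class Command:
    """A hypothetical high-level motion command proposed by an AI model."""
    action: str        # e.g. "walk", "stop", "sit"
    speed_mps: float   # requested speed in metres per second
    target_zone: str   # named area the robot would move into


# A rule returns None if the command is acceptable, or a reason string if not.
Rule = Callable[[Command], Optional[str]]

# Illustrative limits only; real values would depend on the robot and the site.
ALLOWED_ACTIONS = frozenset({"walk", "stop", "sit"})
MAX_SPEED_MPS = 1.0
FORBIDDEN_ZONES = frozenset({"stairwell", "server_room"})


def action_rule(cmd: Command) -> Optional[str]:
    if cmd.action not in ALLOWED_ACTIONS:
        return f"action '{cmd.action}' is not allowed"
    return None


def speed_rule(cmd: Command) -> Optional[str]:
    if cmd.speed_mps < 0 or cmd.speed_mps > MAX_SPEED_MPS:
        return f"speed {cmd.speed_mps} m/s is outside 0..{MAX_SPEED_MPS}"
    return None


def zone_rule(cmd: Command) -> Optional[str]:
    if cmd.target_zone in FORBIDDEN_ZONES:
        return f"zone '{cmd.target_zone}' is off limits"
    return None


RULES: List[Rule] = [action_rule, speed_rule, zone_rule]


def check(cmd: Command, rules: List[Rule] = RULES) -> Tuple[bool, List[str]]:
    """Run every rule; the command passes only if no rule objects."""
    violations = [reason for reason in (rule(cmd) for rule in rules)
                  if reason is not None]
    return (not violations, violations)


if __name__ == "__main__":
    for proposed in (Command("walk", 0.5, "lab"),
                     Command("walk", 2.5, "stairwell")):
        allowed, reasons = check(proposed)
        print(proposed, "->", "forward to robot" if allowed else reasons)
```

The point of the design is that the rules sit outside the model: whatever the model generates, only commands that pass every check would be forwarded to the hardware.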

Combining AI with physical embodiment could make robots far more useful across many sectors. As Anthropic’s research suggests, it could also make them riskier, which calls for vigilant oversight and proactive safety measures.